Oracle strongly recommends configuring HugePages on servers where Oracle databases use more than 8 GB of memory.
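To see whether HugePages are configured at all, a minimal sketch such as the following can summarise the counters from a meminfo-style file. The function name `hugepages_report` and its output labels are made up for illustration, and total RAM is used only as a rough proxy for the memory used by Oracle databases; this is not Oracle's sizing method.

```shell
#!/bin/sh
# hugepages_report [FILE]
# Hypothetical helper: summarises HugePages counters from a meminfo-style
# file (defaults to /proc/meminfo on Linux).
hugepages_report() (
    file=${1:-/proc/meminfo}
    awk '
        $1 == "MemTotal:"        { mem_kb = $2 }
        $1 == "HugePages_Total:" { total = $2 }
        $1 == "HugePages_Free:"  { free = $2 }
        $1 == "Hugepagesize:"    { size_kb = $2 }
        END {
            printf "MemTotal_MB=%d\n", mem_kb / 1024
            printf "HugePages_Total=%d\n", total
            printf "HugePages_Free=%d\n", free
            printf "HugePages_MB=%d\n", total * size_kb / 1024
            # Assumption: total RAM stands in for memory used by Oracle.
            # The 8 GB threshold comes from the recommendation above.
            if (mem_kb > 8 * 1024 * 1024 && total == 0)
                print "NOTE=more than 8 GB RAM and HugePages not configured"
        }
    ' "$file"
)
```

Calling `hugepages_report` with no argument reads /proc/meminfo on the current server.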

In 12c Grid Infrastructure (GI), a “Typical” installation uses the SCAN name as the cluster name by default. The cluster name can be customized only in an “Advanced” installation.

The cluster name is case-insensitive, must be unique across your enterprise, must be at least one character long and no more than 15 characters in length, must be alphanumeric, cannot begin with a numeral, and may contain hyphens (-). Underscore characters (_) are not allowed.
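The naming rules above can be checked before installation with a small shell function. This is a sketch only: `valid_cluster_name` is a hypothetical helper, it tests just the rules as stated in this post, and it cannot check uniqueness across the enterprise.

```shell
#!/bin/sh
# valid_cluster_name NAME
# Hypothetical helper: returns 0 when NAME satisfies the rules listed above,
# 1 otherwise.
valid_cluster_name() {
    name=$1
    # Length: at least 1 and at most 15 characters.
    [ "${#name}" -ge 1 ] && [ "${#name}" -le 15 ] || return 1
    case $name in
        [0-9]*) return 1 ;;            # must not begin with a numeral
        *[!A-Za-z0-9-]*) return 1 ;;   # letters, digits and hyphens only
    esac
    return 0
}
```

For example, `valid_cluster_name RACTEST-CLUSTER` succeeds, while `valid_cluster_name rac_test` fails because of the underscore.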

In 12c, if you configure a Standard cluster and choose a Typical installation, the SCAN is also the name of the cluster.

In an Advanced installation, the SCAN and the cluster name are entered in separate fields, so this is the only installation type in which you can choose your own cluster name.

Select the cluster name carefully. After installation, you can only change the cluster name by reinstalling Oracle Grid Infrastructure.

Here are several ways to find the Oracle Clusterware cluster name:

a) Run the “cemutlo” command under GI_HOME/bin.

$ cd /u01/app/12.1.0.2/grid/bin

$ ./cemutlo -n

RACTEST-CLUSTER

b) Look in $GI_HOME/cdata, which contains a directory named after the cluster.

$ cd /u01/app/12.1.0.2/grid/cdata

$ ls -l

total 1280

drwxrwxr-x 2 grid oinstall 4096 Sep 19 08:23 RACTEST-CLUSTER

drwxr-xr-x 2 grid oinstall 4096 Sep 18 12:36 racnode1

-rw------- 1 root oinstall 503484416 Sep 19 08:28 racnode1.olr

drwxr-xr-x 2 grid oinstall 4096 Sep 18 12:24 localhost

c) From the crsconfig_params or cluster.ini file.

$ cd /u01/app/12.1.0.2/grid/crs/install/

$ grep -i cluster_name crsconfig_params

CLUSTER_NAME=RACTEST-CLUSTER

$ cd /u01/app/12.1.0.2/grid/install

$ cat cluster.ini

[cluster_info]

cluster_name=RACTEST-CLUSTER

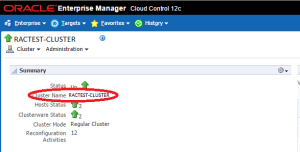

d) From OEM:

e) Run the “olsnodes” command under GI_HOME/bin.

$ cd /u01/app/12.2.0.1/grid/bin

$ ./olsnodes -c

RACTEST-CLUSTER
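The command-line and file-based lookups above can be combined in one script. The sketch below tries `olsnodes -c` first and then falls back to the two files. The function names are hypothetical, and the directory layout under the Grid home is assumed to match the paths shown in this post.

```shell
#!/bin/sh
# cluster_name_from_file FILE
# Prints the value of the first cluster_name=... line. The key is matched
# case-insensitively, so both crsconfig_params and cluster.ini work.
cluster_name_from_file() {
    awk -F= 'tolower($1) == "cluster_name" { print $2; exit }' "$1"
}

# find_cluster_name GI_HOME
# Hypothetical wrapper: asks olsnodes first, then reads the files.
find_cluster_name() {
    gi_home=$1
    if [ -x "$gi_home/bin/olsnodes" ]; then
        "$gi_home/bin/olsnodes" -c && return 0
    fi
    for f in "$gi_home/crs/install/crsconfig_params" \
             "$gi_home/install/cluster.ini"; do
        if [ -r "$f" ]; then
            cluster_name_from_file "$f"
            return 0
        fi
    done
    return 1
}
```

With the Grid home used earlier, the call would be `find_cluster_name /u01/app/12.1.0.2/grid`.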

Use the Oracle-provided sshUserSetup.sh script to set up passwordless SSH user equivalence for RAC nodes. With the “advanced” option, user equivalence is set up in both directions between racnode1 and racnode2.

Another post showed how to set up SSH user equivalence between RAC nodes manually, before installing the GI and RAC software.

This post uses the sshUserSetup.sh script to create user equivalence automatically. The script ships with the GI installation media.

Pay attention to the “advanced” option. Without it, user equivalence is set up in one direction only (racnode1 –> racnode2). With it, user equivalence is set up in both directions (racnode1 <–> racnode2).

grid@racnode1:/u01/app/software/grid/sshsetup$ ./sshUserSetup.sh -user grid -hosts "racnode1 racnode2" -noPromptPassphrase -advanced -exverify
The output of this script is also logged into /tmp/sshUserSetup_2016-09-14-14-59-01.log
Hosts are racnode1 racnode2
user is grid
Platform:- Linux
Checking if the remote hosts are reachable
PING racnode1 (10.11.12.13) 56(84) bytes of data.
64 bytes from racnode1 (10.11.12.13): icmp_seq=1 ttl=64 time=0.017 ms
64 bytes from racnode1 (10.11.12.13): icmp_seq=2 ttl=64 time=0.015 ms
64 bytes from racnode1 (10.11.12.13): icmp_seq=3 ttl=64 time=0.015 ms
64 bytes from racnode1 (10.11.12.13): icmp_seq=4 ttl=64 time=0.009 ms
64 bytes from racnode1 (10.11.12.13): icmp_seq=5 ttl=64 time=0.008 ms

--- racnode1 ping statistics ---
5 packets transmitted, 5 received, 0% packet loss, time 4000ms
rtt min/avg/max/mdev = 0.008/0.012/0.017/0.005 ms
PING racnode2 (10.11.12.15) 56(84) bytes of data.
64 bytes from racnode2 (10.11.12.15): icmp_seq=1 ttl=64 time=1.71 ms
64 bytes from racnode2 (10.11.12.15): icmp_seq=2 ttl=64 time=0.081 ms
64 bytes from racnode2 (10.11.12.15): icmp_seq=3 ttl=64 time=0.094 ms
64 bytes from racnode2 (10.11.12.15): icmp_seq=4 ttl=64 time=0.084 ms
64 bytes from racnode2 (10.11.12.15): icmp_seq=5 ttl=64 time=0.077 ms

--- racnode2 ping statistics ---
5 packets transmitted, 5 received, 0% packet loss, time 4001ms
rtt min/avg/max/mdev = 0.077/0.409/1.710/0.650 ms
Remote host reachability check succeeded.
The following hosts are reachable: racnode1 racnode2.
The following hosts are not reachable: .
All hosts are reachable. Proceeding further...
firsthost racnode1
numhosts 2
The script will setup SSH connectivity from the host racnode1 to all the remote hosts. After the script is executed, the user can use SSH to run commands on the remote hosts or copy files between this host racnode1 and the remote hosts without being prompted for passwords or confirmations.

NOTE 1:
As part of the setup procedure, this script will use ssh and scp to copy files between the local host and the remote hosts. Since the script does not store passwords, you may be prompted for the passwords during the execution of the script whenever ssh or scp is invoked.

NOTE 2:
AS PER SSH REQUIREMENTS, THIS SCRIPT WILL SECURE THE USER HOME DIRECTORY AND THE .ssh DIRECTORY BY REVOKING GROUP AND WORLD WRITE PRIVILEDGES TO THESE directories.

Do you want to continue and let the script make the above mentioned changes (yes/no)?
yes

The user chose yes
User chose to skip passphrase related questions.
Creating .ssh directory on local host, if not present already
Creating authorized_keys file on local host
Changing permissions on authorized_keys to 644 on local host
Creating known_hosts file on local host
Changing permissions on known_hosts to 644 on local host
Creating config file on local host
If a config file exists already at /home/grid/.ssh/config, it would be backed up to /home/grid/.ssh/config.backup.
Removing old private/public keys on local host
Running SSH keygen on local host with empty passphrase
Generating public/private rsa key pair.
Your identification has been saved in /home/grid/.ssh/id_rsa.
Your public key has been saved in /home/grid/.ssh/id_rsa.pub.
The key fingerprint is:
77:c8:3b:d6:61:ff:21:40:f8:f0:79:d8:39:52:df:85 grid@racnode1
The key's randomart image is:
+--[ RSA 1024]----+
| |
| . . |
| o . .E .|
| .=.= o o|
| S +B+= ..|
| . =+o. |
| + .... |
| . . ...|
| .|
+-----------------+
Creating .ssh directory and setting permissions on remote host racnode1
THE SCRIPT WOULD ALSO BE REVOKING WRITE PERMISSIONS FOR group AND others ON THE HOME DIRECTORY FOR grid. THIS IS AN SSH REQUIREMENT.
The script would create ~grid/.ssh/config file on remote host racnode1. If a config file exists already at ~grid/.ssh/config, it would be backed up to ~grid/.ssh/config.backup.
The user may be prompted for a password here since the script would be running SSH on host racnode1.
Warning: Permanently added 'racnode1,10.11.12.13' (RSA) to the list of known hosts.
grid@racnode1's password:
Done with creating .ssh directory and setting permissions on remote host racnode1.
Creating .ssh directory and setting permissions on remote host racnode2
THE SCRIPT WOULD ALSO BE REVOKING WRITE PERMISSIONS FOR group AND others ON THE HOME DIRECTORY FOR grid. THIS IS AN SSH REQUIREMENT.
The script would create ~grid/.ssh/config file on remote host racnode2. If a config file exists already at ~grid/.ssh/config, it would be backed up to ~grid/.ssh/config.backup.
The user may be prompted for a password here since the script would be running SSH on host racnode2.
Warning: Permanently added 'racnode2,10.11.12.15' (RSA) to the list of known hosts.
grid@racnode2's password:
Done with creating .ssh directory and setting permissions on remote host racnode2.
Copying local host public key to the remote host racnode1
The user may be prompted for a password or passphrase here since the script would be using SCP for host racnode1.
grid@racnode1's password:
Done copying local host public key to the remote host racnode1
Copying local host public key to the remote host racnode2
The user may be prompted for a password or passphrase here since the script would be using SCP for host racnode2.
grid@racnode2's password:
Done copying local host public key to the remote host racnode2
Creating keys on remote host racnode1 if they do not exist already. This is required to setup SSH on host racnode1.
Creating keys on remote host racnode2 if they do not exist already. This is required to setup SSH on host racnode2.
Generating public/private rsa key pair.
Your identification has been saved in .ssh/id_rsa.
Your public key has been saved in .ssh/id_rsa.pub.
The key fingerprint is:
8d:07:39:11:ee:f8:ac:cd:bf:00:c5:40:0a:d1:9c:ab grid@racnode2
The key's randomart image is:
+--[ RSA 1024]----+
| o+ oo o. |
| .+. + o |
| .. B |
| . + = |
| . o S o |
| E + . |
| + |
| + . |
| . o.o. |
+-----------------+
Updating authorized_keys file on remote host racnode1
Updating known_hosts file on remote host racnode1
Updating authorized_keys file on remote host racnode2
Updating known_hosts file on remote host racnode2
cat: /home/grid/.ssh/known_hosts.tmp: No such file or directory
cat: /home/grid/.ssh/authorized_keys.tmp: No such file or directory
SSH setup is complete.

------------------------------------------------------------------------
Verifying SSH setup
===================
The script will now run the date command on the remote nodes using ssh to verify if ssh is setup correctly. IF THE SETUP IS CORRECTLY SETUP, THERE SHOULD BE NO OUTPUT OTHER THAN THE DATE AND SSH SHOULD NOT ASK FOR PASSWORDS. If you see any output other than date or are prompted for the password, ssh is not setup correctly and you will need to resolve the issue and set up ssh again.
The possible causes for failure could be:
1. The server settings in /etc/ssh/sshd_config file do not allow ssh for user grid.
2. The server may have disabled public key based authentication.
3. The client public key on the server may be outdated.
4. ~grid or ~grid/.ssh on the remote host may not be owned by grid.
5. User may not have passed -shared option for shared remote users or may be passing the -shared option for non-shared remote users.
6. If there is output in addition to the date, but no password is asked, it may be a security alert shown as part of company policy. Append the additional text to the <OMS HOME>/sysman/prov/resources/ignoreMessages.txt file.
------------------------------------------------------------------------
--racnode1:--
Running /usr/bin/ssh -x -l grid racnode1 date to verify SSH connectivity has been setup from local host to racnode1.
IF YOU SEE ANY OTHER OUTPUT BESIDES THE OUTPUT OF THE DATE COMMAND OR IF YOU ARE PROMPTED FOR A PASSWORD HERE, IT MEANS SSH SETUP HAS NOT BEEN SUCCESSFUL. Please note that being prompted for a passphrase may be OK but being prompted for a password is ERROR.
Wed Sep 14 14:59:32 AEST 2016
------------------------------------------------------------------------
--racnode2:--
Running /usr/bin/ssh -x -l grid racnode2 date to verify SSH connectivity has been setup from local host to racnode2.
IF YOU SEE ANY OTHER OUTPUT BESIDES THE OUTPUT OF THE DATE COMMAND OR IF YOU ARE PROMPTED FOR A PASSWORD HERE, IT MEANS SSH SETUP HAS NOT BEEN SUCCESSFUL. Please note that being prompted for a passphrase may be OK but being prompted for a password is ERROR.
Wed Sep 14 14:59:33 AEST 2016
------------------------------------------------------------------------
------------------------------------------------------------------------
Verifying SSH connectivity has been setup from racnode1 to racnode1
------------------------------------------------------------------------
IF YOU SEE ANY OTHER OUTPUT BESIDES THE OUTPUT OF THE DATE COMMAND OR IF YOU ARE PROMPTED FOR A PASSWORD HERE, IT MEANS SSH SETUP HAS NOT BEEN SUCCESSFUL.
Wed Sep 14 14:59:33 AEST 2016
------------------------------------------------------------------------
------------------------------------------------------------------------
Verifying SSH connectivity has been setup from racnode1 to racnode2
------------------------------------------------------------------------
IF YOU SEE ANY OTHER OUTPUT BESIDES THE OUTPUT OF THE DATE COMMAND OR IF YOU ARE PROMPTED FOR A PASSWORD HERE, IT MEANS SSH SETUP HAS NOT BEEN SUCCESSFUL.
Wed Sep 14 14:59:33 AEST 2016
------------------------------------------------------------------------
-Verification from racnode1 complete-
------------------------------------------------------------------------
Verifying SSH connectivity has been setup from racnode2 to racnode1
------------------------------------------------------------------------
IF YOU SEE ANY OTHER OUTPUT BESIDES THE OUTPUT OF THE DATE COMMAND OR IF YOU ARE PROMPTED FOR A PASSWORD HERE, IT MEANS SSH SETUP HAS NOT BEEN SUCCESSFUL.
Wed Sep 14 14:59:33 AEST 2016
------------------------------------------------------------------------
------------------------------------------------------------------------
Verifying SSH connectivity has been setup from racnode2 to racnode2
------------------------------------------------------------------------
IF YOU SEE ANY OTHER OUTPUT BESIDES THE OUTPUT OF THE DATE COMMAND OR IF YOU ARE PROMPTED FOR A PASSWORD HERE, IT MEANS SSH SETUP HAS NOT BEEN SUCCESSFUL.
Wed Sep 14 14:59:33 AEST 2016
------------------------------------------------------------------------
-Verification from racnode2 complete-
SSH verification complete.
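The all-pairs verification at the end of that output can be repeated later without rerunning the setup script. The sketch below is an independent check, not part of sshUserSetup.sh: `check_equivalence` and the `SSH_CMD` variable are hypothetical names, and `BatchMode=yes` is used so that ssh fails instead of prompting for a password.

```shell
#!/bin/sh
# SSH_CMD is an assumption of this sketch: a single command name, so another
# client (or a test stub) can be substituted. It defaults to plain ssh.
SSH_CMD=${SSH_CMD:-ssh}

# check_equivalence USER HOST...
# From every listed host, runs "date" on every listed host via ssh.
# Prints one OK/FAIL line per pair; returns non-zero if any pair failed.
check_equivalence() {
    user=$1
    shift
    rc=0
    for src in "$@"; do
        for dst in "$@"; do
            # First hop: local host to $src. Second hop: $src to $dst.
            if "$SSH_CMD" -o BatchMode=yes -l "$user" "$src" \
                "ssh -o BatchMode=yes -l $user $dst date" \
                </dev/null >/dev/null 2>&1; then
                echo "OK   $src -> $dst"
            else
                echo "FAIL $src -> $dst"
                rc=1
            fi
        done
    done
    return $rc
}
```

For the two nodes in this post the call would be `check_equivalence grid racnode1 racnode2`. A FAIL line for racnode2 -> racnode1 would be the expected symptom of a one-directional setup.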

In a GI/RAC environment, passwordless SSH user equivalence is a prerequisite for the Grid user and the RAC user.

Here is an example of how to manually set up passwordless SSH user equivalence on a multi-node cluster.

a) Check that the SSH daemon is running.

$ pgrep sshd

b) Log in as the grid or oracle user, create the .ssh directory under the user's home directory, and set the correct permissions on it.

$ mkdir ~/.ssh

$ chmod 700 ~/.ssh

c) Run the command below to generate a DSA public/private key pair. Press Enter at every prompt. The same command with -t rsa generates an RSA key pair. Recent OpenSSH releases disable DSA keys by default, so RSA is the safer choice there.

$ /usr/bin/ssh-keygen -t dsa

$ cd .ssh

$ ls -ltr

-rw-r--r-- 1 grid oinstall 398 Sep 14 12:06 id_dsa.pub

-rw------- 1 grid oinstall 1675 Sep 14 12:06 id_dsa

d) Repeat steps a) through c) on the other nodes.

e) On node 1, add the DSA public key to the authorized_keys file:

$ cat id_dsa.pub >> authorized_keys

$ ls

f) Copy the authorized_keys file to node 2:

$ scp authorized_keys racnode2:/home/grid/.ssh/

g) On node 2, add the public key of user grid to the authorized_keys file:

$ cat id_dsa.pub >> authorized_keys

h) Copy the authorized_keys file back to node 1:

$ scp authorized_keys racnode1:/home/grid/.ssh/

i) Test the user equivalence:

$ ssh racnode1 date

$ ssh racnode1-vip date

$ ssh racnode2 date

$ ssh racnode2-vip date

... ...
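Because steps e) and g) append with `>>`, running them twice leaves duplicate entries in authorized_keys. A small wrapper can make the append repeatable. This is a sketch only: `add_pubkey` is a hypothetical helper, and mode 600 on authorized_keys is a common choice rather than something this procedure prescribes.

```shell
#!/bin/sh
# add_pubkey PUBKEY_FILE AUTHORIZED_KEYS_FILE
# Hypothetical helper: appends the public key unless that exact line is
# already present, then restricts the file to its owner.
add_pubkey() {
    pub=$1
    auth=$2
    touch "$auth"
    # -F fixed string, -x whole-line match, -q quiet, -f read pattern from file
    if ! grep -qxFf "$pub" "$auth"; then
        cat "$pub" >> "$auth"
    fi
    chmod 600 "$auth"
}
```

On each node the call would be `add_pubkey ~/.ssh/id_dsa.pub ~/.ssh/authorized_keys`, followed by the scp steps as before.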

This is a bug that can be ignored. Pay attention, however, whenever “runcluvfy.sh” and “cluvfy” report different results.

The “runcluvfy.sh” pre-check shows a WARNING about /dev/shm, but “cluvfy” does not complain.

$ runcluvfy.sh stage -pre crsinst -n racnode1,racnode2 -verbose

....

...

..

Daemon not running check passed for process "avahi-daemon"

Starting check for /dev/shm mounted as temporary file system ...

WARNING:

The size of in-memory file system mounted at /dev/shm is "259072" megabytes which does not match the size in /etc/fstab as "0" megabytes

The size of in-memory file system mounted at /dev/shm is "259072" megabytes which does not match the size in /etc/fstab as "0" megabytes

Check for /dev/shm mounted as temporary file system passed

....

...

..

.

Pre-check for cluster services setup was unsuccessful on all the nodes.

$ cluvfy stage -pre crsinst -n racnode1,racnode2 -verbose

....

...

..

.

Daemon not running check passed for process "avahi-daemon"

Starting check for /dev/shm mounted as temporary file system ...

Check for /dev/shm mounted as temporary file system passed

Starting check for /boot mount ...

Check for /boot mount passed

Starting check for zeroconf check ...

Check for zeroconf check passed

Pre-check for cluster services setup was successful on all the nodes.

According to Doc ID 1918620.1, this WARNING can be ignored.

APPLIES TO:

Oracle Database – Enterprise Edition – Version 12.1.0.2 to 12.1.0.2 [Release 12.1]

SYMPTOMS

12.1.0.2 OUI/CVU (cluvfy or runcluvfy.sh) reports the following warning:

WARNING: The size of in-memory file system mounted at /dev/shm is "24576" megabytes which does not match the

OR

PRVE-0426 : The size of in-memory file system mounted as /dev/shm is "74158080k" megabytes which is less than the required size of "2048" megabytes on node ""

/dev/shm setting is default so no size specified is in /etc/fstab:

$ grep shm /etc/fstab

tmpfs /dev/shm tmpfs defaults 0 0

/dev/shm has OS default setting:

$ df -m | grep shm

tmpfs 7975 646 7330 9% /dev/shm

CAUSE

Due to unpublished bug 19031737

SOLUTION

Since the size of /dev/shm is bigger than 2GB, the warning can be ignored.
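Before ignoring the warning, it is worth confirming that /dev/shm really is larger than the 2048 MB quoted in the note. The sketch below parses `df` output for that purpose. Both function names are hypothetical, and the input is assumed to have one line per filesystem with the mount point in the last column.

```shell
#!/bin/sh
# shm_size_mb
# Reads "df -Pm" style output on stdin and prints the size in MB of the
# filesystem mounted at /dev/shm.
shm_size_mb() {
    awk '$NF == "/dev/shm" { print $2; exit }'
}

# shm_large_enough SIZE_MB [MIN_MB]
# True when SIZE_MB is at least MIN_MB (default 2048, from the note above).
shm_large_enough() {
    [ "$1" -ge "${2:-2048}" ]
}
```

A typical call would be `size=$(df -Pm /dev/shm | shm_size_mb)` followed by `shm_large_enough "$size" && echo "warning can be ignored"`.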